Nvidia moved quickly to calm investor nerves during its earnings call on Wednesday.

The chipmaker delivered another blowout earnings report that underscored how little momentum the AI boom has lost. Nvidia, the world’s most valuable company by market capitalization, topped Wall Street expectations across the board in its fiscal fourth quarter and issued a forecast that sailed past analyst estimates.

The upbeat results arrive at a delicate moment for AI-linked stocks, which have recently shown signs of fatigue.

From incorporating Groq into Nvidia systems to an update on the new Vera Rubin chips, here are the biggest takeaways from Nvidia’s fourth-quarter earnings call.

1. Nvidia is becoming the backbone of Big AI

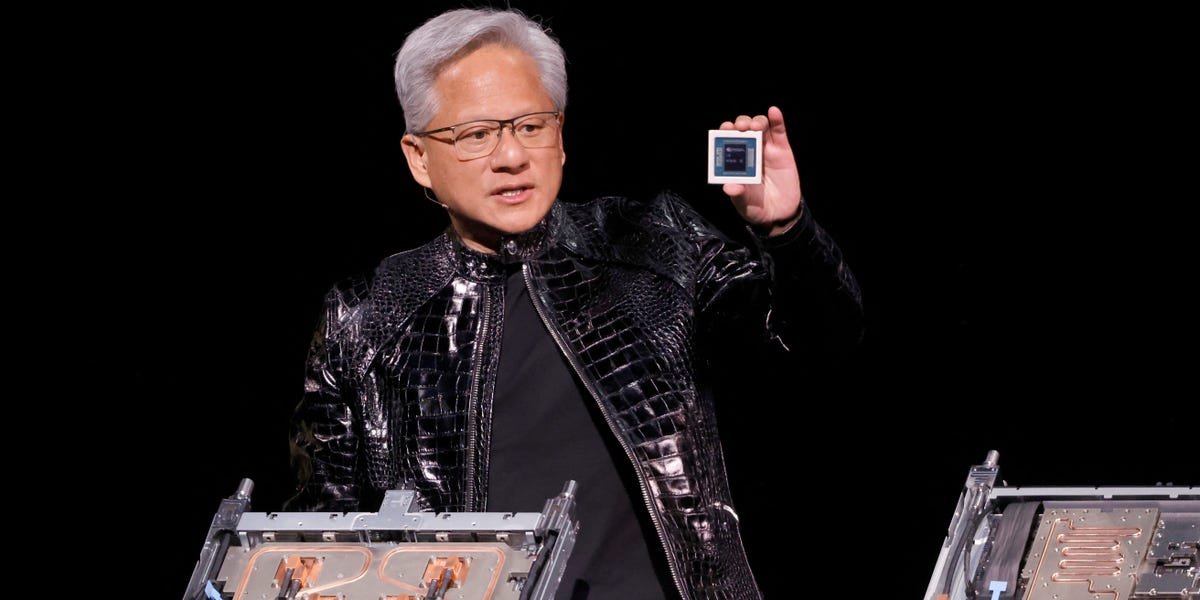

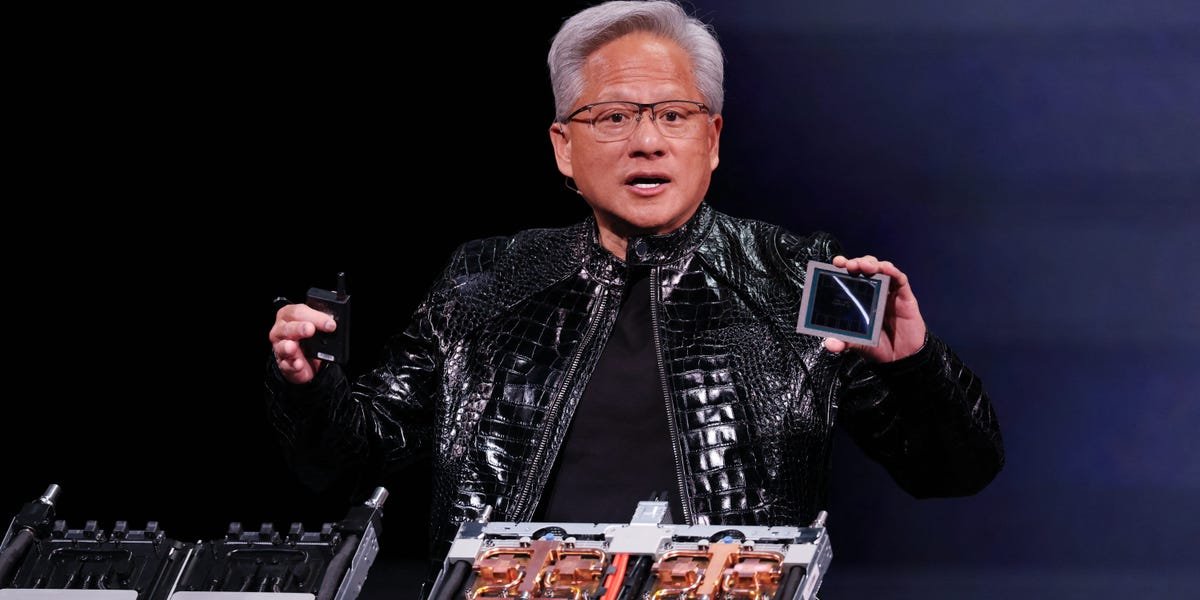

Over the course of the call, CEO Jensen Huang repeatedly positioned Nvidia at the center of the AI industry’s biggest players.

OpenAI’s latest Codex model was trained on and runs on Nvidia’s Blackwell systems, and the two companies are close to reaching a multibillion-dollar partnership, he said.

Meta is deploying Nvidia GPUs in its push toward superintelligence, and Nvidia also announced an investment of up to $10 billion in Anthropic.

Huang said his goal is to ensure that every form of AI, from large language models to robotics, is built on Nvidia’s platform.

“We want to take the great opportunity that we have as we’re in the beginning of this new computing era, this new computing platform shift, to put everybody on Nvidia,” he said.

2. Huang teases Groq integration as AI shifts to inference

When asked about Nvidia’s future road map and whether it plans to build customized chips for specific workloads, Huang said the company prefers to keep as much as possible within a single design.

That said, he teased a potentially significant move involving Groq, saying more details would come at Nvidia’s GTC conference in March.

Late last year, Nvidia struck a non-exclusive licensing agreement with Groq for its low-latency AI inference technology — a deal that also brought Groq’s founder and other top engineers on board.

“What we’ll do is we’ll extend our architecture with Groq as an accelerator in very much the ways that we extended Nvidia’s architecture with Mellanox,” Huang said, referring to the networking company Nvidia acquired in 2020.

As AI workloads shift from training large models to running them, the move suggests Nvidia won’t abandon its core platform but will instead fold specialized inference capabilities into it.

3. Samples of the Vera Rubin chips have been shipped

Nvidia has begun shipping early samples of its next-generation Vera Rubin chips to customers.

Chief Financial Officer Colette Kress said during the earnings call that the company delivered “our first Vera Rubin samples” earlier this week and expects broader shipments of the new chips to begin in the second half of 2026.

“We expect every cloud model builder to deploy Vera Rubin,” Kress said.

Huang said at the Consumer Electronics Show in January that, compared with Blackwell, Rubin is more than three times as fast, can run inference five times faster, and delivers significantly more inference compute per watt of energy.

4. Addressing future risks

Nvidia appears concerned about whether there will be enough resources to sustain the demand for data centers.

In its latest 10-K report filed with the Securities and Exchange Commission, Nvidia listed the availability of data centers, energy, and capital to support the data center buildout as a risk factor, writing that “any shortage of these and other necessary resources could impact our future revenue and financial performance.”

“Expanding energy capacity to meet demand is a complex, multi-year process involving significant regulatory, technical, and construction challenges,” wrote Nvidia.

“In addition, access to capital can be particularly constrained for less-capitalized companies, which may face difficulties securing financing for large-scale infrastructure projects,” Nvidia added.

5. An OpenAI deal may finally be ‘close’

Huang addressed the company’s growing slate of strategic investments, including a deal with OpenAI, as questions mount over whether Nvidia’s strategy creates circular relationships with its own customers.

Speaking about Nvidia’s investments in AI companies such as Anthropic and OpenAI, Huang said the strategy is centered on strengthening the broader AI ecosystem and ensuring the next generation of software and hardware is built on Nvidia’s platform, from large language models to robotics.

Huang confirmed that Nvidia is “close” to finalizing a deal with OpenAI. The partnership was first outlined in 2025 as part of a massive AI infrastructure initiative that could reach $100 billion.