At Meta, there’s no escaping AI.

The company has begun running intensive AI training weeks to encourage staff to experiment more with AI tools, according to Meta employees who spoke with Business Insider and public LinkedIn posts.

The weeks have involved a series of hackathons, demos, and other projects where Meta staff show off what they can build with AI, regardless of their job title or seniority. Some of the projects are built with Anthropic’s Claude Code, which the company has adopted widely internally.

This is part of Meta’s latest initiative to embrace AI across its workforce, which has included setting org targets for AI adoption and reorganizing some teams around AI-native “pods.” Similar pushes are taking place across corporate America as companies aim to become more efficient with AI. Google has told some employees their AI use will be considered in performance reviews, and JPMorgan has told software engineers it expects them to use AI to save time.

“It’s well-known that this is a priority and we’re focused on using AI to help employees with their day-to-day work,” a Meta spokesperson told Business Insider.

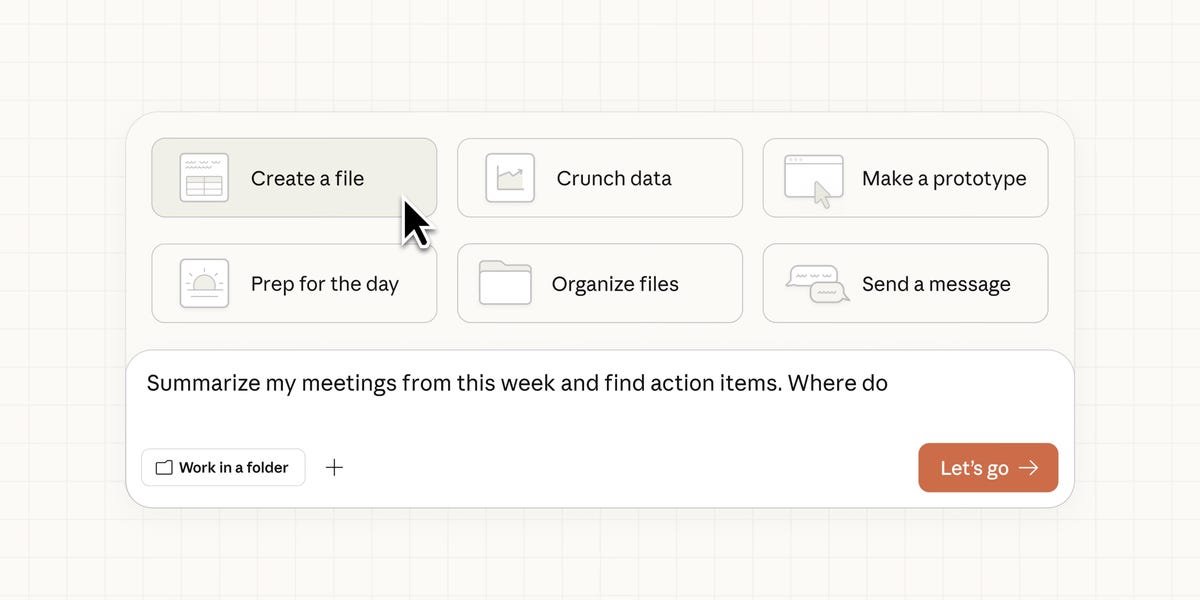

Internally, these sessions have been given names such as “AI Transformation Week.” During the sessions, some employees were given demos on how agents and other tools could work across their laptops and phones, an employee who attended some sessions told Business Insider.

Some of these AI weeks took place in March. One Meta employee told Business Insider that some teams held their own AI weeks at the end of last year, during which staff used vibe coding to create something valuable with no strict output requirements.

At one hackathon during Meta’s AI Transformation Week, attendees watched demos of the company’s internal AI tools, Claude Code, and others, according to a LinkedIn post from an employee. AI agents are a big focus, with the aim of having employees guide autonomous systems that can handle everything from coding to compiling reports.

Design is also part of the effort. One Meta product manager touted building an interactive vibe-coding guide for designing products at Meta using Claude Code, according to her website.

Pods and goals

While some employees were brushing up on AI this week, Meta laid off several hundred employees across Reality Labs, the division overseeing its virtual reality projects, and other orgs. The company has spent billions on hiring top AI talent and building out infrastructure. However, it has yet to launch its long-awaited frontier model, internally codenamed Avocado.

While that has given Meta the perception of being behind in the AI race, a top Wall Street analyst said earlier this month that the company’s aggressive internal AI transformation could, in fact, give it “insurmountable” cost and performance advantages.

Meta has been making other changes in an effort to be what CEO Mark Zuckerberg has described as “AI native.” In one division of Reality Labs, employees were given new titles like “AI builder” and organized into AI-native “pods,” Business Insider previously reported.

The company has also set specific goals for adopting AI tools that vary across teams, according to an internal document reviewed by Business Insider.

On Tuesday, Meta’s CTO Andrew Bosworth said he would take over leadership of the company’s efforts to adopt AI for internal use, an initiative known internally as “AI for Work,” according to a copy of the post seen by Business Insider and first reported by The Wall Street Journal.

“These tools hold the promise of giving each employee so much more power to accomplish their work,” Bosworth said in a post on X.