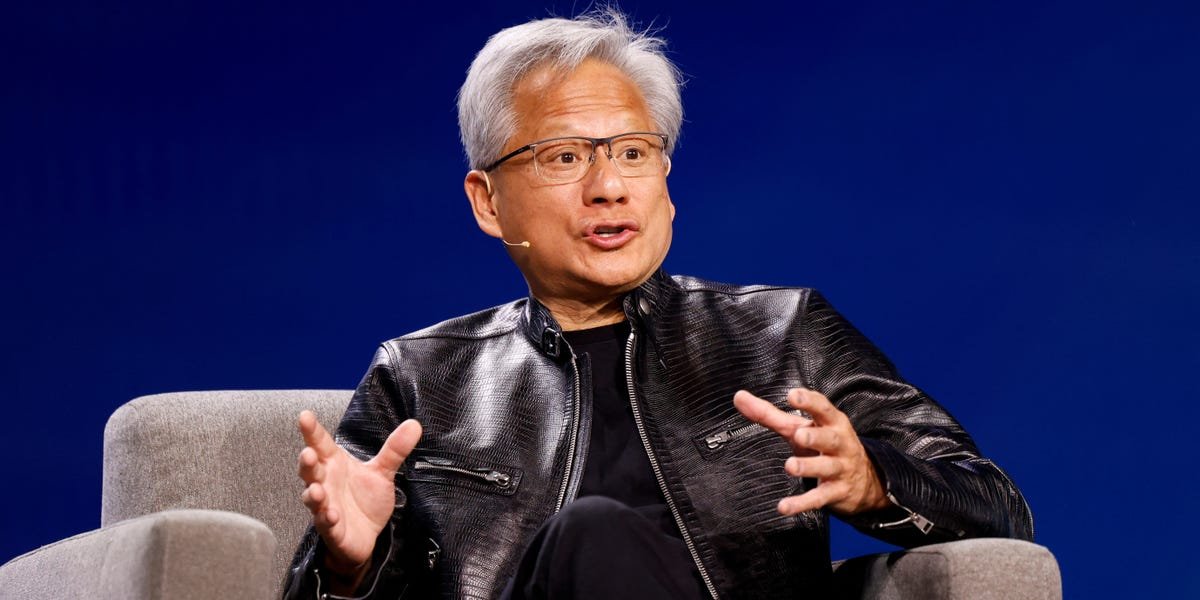

Nvidia’s GTC conference has become its biggest stage for outlining the future of AI.

The annual event increasingly attracts a broader crowd. At past gatherings, which have featured Denny’s pop-ups and Taiwan-inspired night markets, Nvidia CEO Jensen Huang has unveiled sweeping product roadmaps for the company’s GPUs and other AI chips. Nvidia has also used the stage to announce major pacts with tech giants and governments alike.

This year’s event comes on the heels of a blockbuster earnings report that barely nudged the company’s stock, raising questions about how long the AI spending boom can last.

Polymarket users are even wagering on how many times Huang will utter phrases like “GPU” onstage.

Here’s what analysts and investors will be watching:

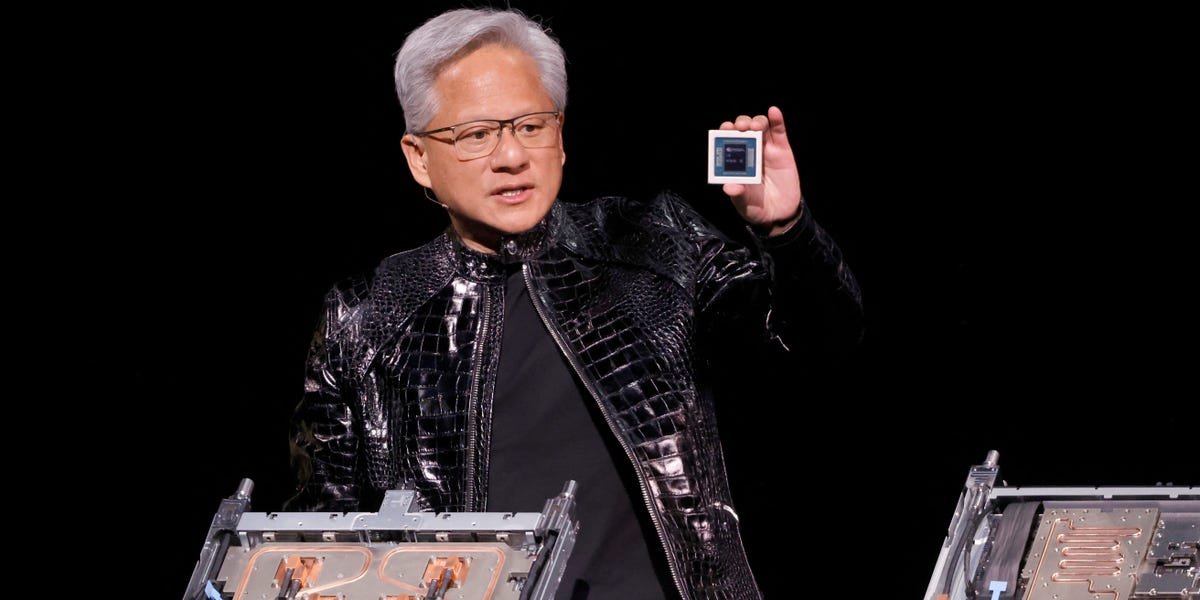

1. A new inference chip

Inference, or running trained models, is AI’s next act. Expect Nvidia to make a big statement as competitors — from cloud giants to a slew of chip startups — encroach on this space.

Huang previously teased “several new chips the world has never seen before,” and The Wall Street Journal reported in February that Nvidia is readying an inference-focused product incorporating technology from AI startup Groq, with OpenAI expected to be a key customer.

The chip’s design could have big supply chain implications. Inference relies heavily on memory, and with high bandwidth memory (HBM) in tight supply, investors will be watching whether Nvidia leans more heavily on SRAM (a fast on-chip memory used in inference designs) rather than relying solely on HBM.

Sid Sheth, founder and CEO of inference chip startup d-Matrix, said that while Nvidia will stay dominant in training, “inference is a different ballgame.”

He added that CUDA, Nvidia’s software platform that underpins most AI training and has locked developers into its ecosystem, is less of a moat in inference. Running finished AI models doesn’t require the same kind of programming as training them, he said, so developers can more easily turn to Nvidia’s competitors.

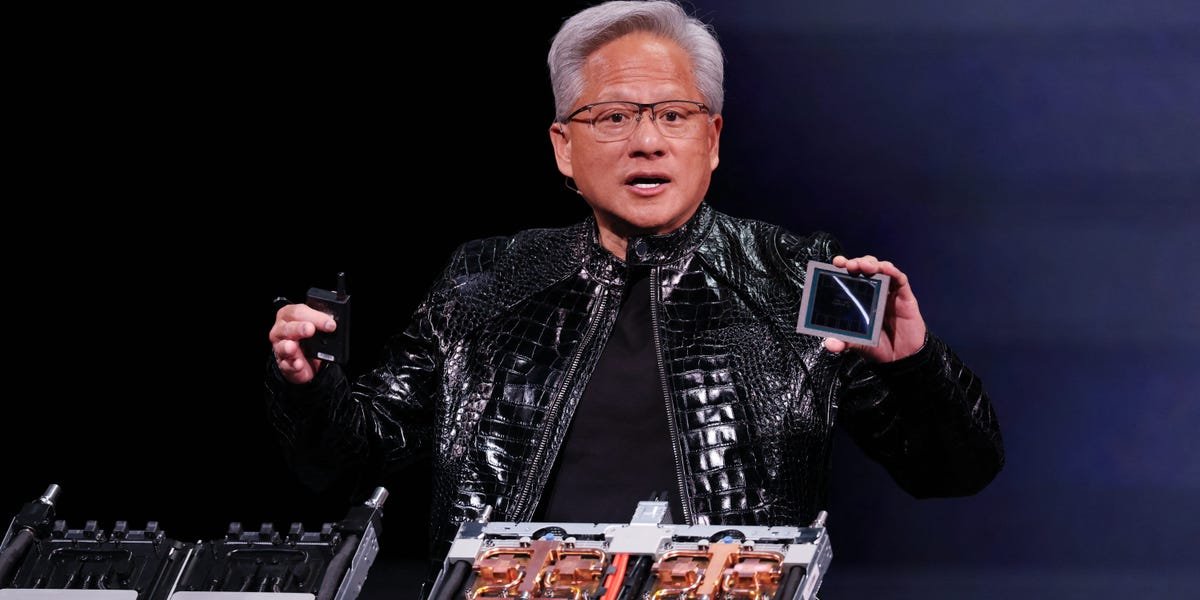

2. Life after Rubin

Nvidia has announced its next-generation Rubin Ultra systems. Rubin is expected to require far more power than past generations, and investors are eager to see how Nvidia manages the transition and whether cloud giants will support it, said Sebastien Naji, a research analyst at William Blair.

Naji is also listening for what comes next: the Feynman generation. The big architectural breakthrough expected here is “copackaged optics,” or the use of light — not electricity — to move data between chips. This reduces power consumption and enables larger AI infrastructure clusters.

Earlier this month, Nvidia announced it had secured multibillion-dollar supply agreements with optical component companies Coherent and Lumentum, signaling how central the technology could become in future systems.

3. Can agents and robots keep the AI gold rush alive?

As Nvidia matures, investors increasingly focus on durability rather than breakneck growth, said Brian Mulberry, chief market strategist at Zacks Investment Management.

Huang has emphasized agentic AI as the next driver of inference demand, a trend that recently reverberated across software stocks. Sheth, the d-Matrix CEO, said that’s only the beginning: voice, video, and multimodal agents have yet to show their potential.

“We haven’t even started,” he said of a forthcoming inference wave.

Robotics could add yet another layer, said Daniel Newman, CEO of The Futurum Group. While robotics is sometimes seen as a longer-term bet, Newman noted that Nvidia reported roughly $6 billion in robotics-related revenue last quarter and is predicting an “aggressive” timetable for humanoids.

4. The geopolitics of GPUs

Huang has entered the political fray at past GTCs, and the landscape is shifting rapidly.

Nvidia halted production of H200 chips for China and shifted capacity to its next-generation Rubin platform, The Financial Times reported. At the same time, the US is weighing export restrictions on AI chips that could make Washington a gatekeeper for international sales.

With sales to China constrained, Newman said other international markets are meaningful to Nvidia, pointing to massive AI infrastructure commitments in Saudi Arabia and the UAE. Still, conflicts in the Middle East have raised questions about sovereign demand, supply chains, energy costs, and the pace of data center buildouts.

In a world where AI is becoming a geopolitical tool, policy could shape Nvidia’s future as much as demand.

Have a tip? Contact this reporter via email at gweiss@businessinsider.com or Signal at @geoffweiss.25. Use a personal email address, a nonwork WiFi network, and a nonwork device; here’s our guide to sharing information securely.